Migrating PyTorch deep learning workloads to the Ascend ecosystem

Date: 2026-05-01

The notebook source code for this article is available on GitHub.

PyTorch is an open source deep learning framework for Python, originally developed by Meta and now hosted under the vendor-neutral PyTorch Foundation. It is the de facto industry standard for training modern deep learning models and large language models (LLMs). Like most other deep learning frameworks, PyTorch natively supports accelerating machine learning workloads on NVIDIA GPUs through NVIDIA's CUDA ecosystem, without the need for special plugins or adapters.

The torch-npu plugin developed by the Huawei Ascend community enables AI/ML engineers to migrate machine learning workloads from NVIDIA GPUs to Ascend NPUs with minimal changes to existing code and processes. The high-level model training logic in PyTorch stays unchanged: the CANN kernels library takes the place of CUDA, and Ascend NPUs take the place of NVIDIA GPUs for hardware acceleration.

This notebook experiment serves as a gentle introduction to migrating deep learning models written in PyTorch to the Ascend ecosystem, using the Fashion MNIST dataset as our motivating example.

Prerequisites

The content in this notebook experiment builds upon the first six chapters of Dive into Deep Learning, also known as D2L.

Python version and dependencies

This notebook experiment runs on Python 3.11 with the following dependencies.

- PyTorch 2.8.0

- TorchVision 0.23.0

- PyYAML 6.0.3

- setuptools 82.0.1

- torch-npu 2.8.0

- CANN 8.5.0

- Matplotlib 3.10.9

- ONNX 1.21.0

- onnxscript 0.7.0

!cat requirements.txt

absl-py==2.4.0

attrs==25.4.0

cloudpickle==3.1.2

decorator==5.2.1

jupyterlab==4.5.6

jupyterlab-git==0.52.0

jupyter-resource-usage==1.2.0

matplotlib==3.10.9

ml-dtypes==0.5.4

onnx==1.21.0

onnxscript==0.7.0

torch==2.8.0

torch-npu==2.8.0

pyyaml==6.0.3

scipy==1.17.1

setuptools==82.0.1

sympy==1.14.0

torchvision==0.23.0

tornado==6.5.5

%pip install -r requirements.txt

Requirement already satisfied: absl-py==2.4.0 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from -r requirements.txt (line 1)) (2.4.0)

Requirement already satisfied: attrs==25.4.0 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from -r requirements.txt (line 2)) (25.4.0)

Requirement already satisfied: cloudpickle==3.1.2 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from -r requirements.txt (line 3)) (3.1.2)

Requirement already satisfied: decorator==5.2.1 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from -r requirements.txt (line 4)) (5.2.1)

Requirement already satisfied: jupyterlab==4.5.6 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from -r requirements.txt (line 5)) (4.5.6)

Requirement already satisfied: jupyterlab-git==0.52.0 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from -r requirements.txt (line 6)) (0.52.0)

Requirement already satisfied: jupyter-resource-usage==1.2.0 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from -r requirements.txt (line 7)) (1.2.0)

Requirement already satisfied: matplotlib==3.10.9 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from -r requirements.txt (line 8)) (3.10.9)

Requirement already satisfied: ml-dtypes==0.5.4 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from -r requirements.txt (line 9)) (0.5.4)

Requirement already satisfied: onnx==1.21.0 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from -r requirements.txt (line 10)) (1.21.0)

Requirement already satisfied: onnxscript==0.7.0 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from -r requirements.txt (line 11)) (0.7.0)

Requirement already satisfied: torch==2.8.0 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from -r requirements.txt (line 12)) (2.8.0)

Requirement already satisfied: torch-npu==2.8.0 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from -r requirements.txt (line 13)) (2.8.0)

Requirement already satisfied: pyyaml==6.0.3 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from -r requirements.txt (line 14)) (6.0.3)

Requirement already satisfied: scipy==1.17.1 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from -r requirements.txt (line 15)) (1.17.1)

Requirement already satisfied: setuptools==82.0.1 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from -r requirements.txt (line 16)) (82.0.1)

Requirement already satisfied: sympy==1.14.0 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from -r requirements.txt (line 17)) (1.14.0)

Requirement already satisfied: torchvision==0.23.0 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from -r requirements.txt (line 18)) (0.23.0)

Requirement already satisfied: tornado==6.5.5 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from -r requirements.txt (line 19)) (6.5.5)

Requirement already satisfied: async-lru>=1.0.0 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jupyterlab==4.5.6->-r requirements.txt (line 5)) (2.3.0)

Requirement already satisfied: httpx<1,>=0.25.0 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jupyterlab==4.5.6->-r requirements.txt (line 5)) (0.28.1)

Requirement already satisfied: ipykernel!=6.30.0,>=6.5.0 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jupyterlab==4.5.6->-r requirements.txt (line 5)) (7.2.0)

Requirement already satisfied: jinja2>=3.0.3 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jupyterlab==4.5.6->-r requirements.txt (line 5)) (3.1.6)

Requirement already satisfied: jupyter-core in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jupyterlab==4.5.6->-r requirements.txt (line 5)) (5.9.1)

Requirement already satisfied: jupyter-lsp>=2.0.0 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jupyterlab==4.5.6->-r requirements.txt (line 5)) (2.3.1)

Requirement already satisfied: jupyter-server<3,>=2.4.0 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jupyterlab==4.5.6->-r requirements.txt (line 5)) (2.17.0)

Requirement already satisfied: jupyterlab-server<3,>=2.28.0 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jupyterlab==4.5.6->-r requirements.txt (line 5)) (2.28.0)

Requirement already satisfied: notebook-shim>=0.2 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jupyterlab==4.5.6->-r requirements.txt (line 5)) (0.2.4)

Requirement already satisfied: packaging in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jupyterlab==4.5.6->-r requirements.txt (line 5)) (26.2)

Requirement already satisfied: traitlets in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jupyterlab==4.5.6->-r requirements.txt (line 5)) (5.14.3)

Requirement already satisfied: nbdime~=4.0.1 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jupyterlab-git==0.52.0->-r requirements.txt (line 6)) (4.0.4)

Requirement already satisfied: nbformat in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jupyterlab-git==0.52.0->-r requirements.txt (line 6)) (5.10.4)

Requirement already satisfied: pexpect in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jupyterlab-git==0.52.0->-r requirements.txt (line 6)) (4.9.0)

Requirement already satisfied: prometheus-client in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jupyter-resource-usage==1.2.0->-r requirements.txt (line 7)) (0.25.0)

Requirement already satisfied: psutil>=5.6 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jupyter-resource-usage==1.2.0->-r requirements.txt (line 7)) (7.2.2)

Requirement already satisfied: pyzmq>=19 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jupyter-resource-usage==1.2.0->-r requirements.txt (line 7)) (27.1.0)

Requirement already satisfied: contourpy>=1.0.1 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from matplotlib==3.10.9->-r requirements.txt (line 8)) (1.3.3)

Requirement already satisfied: cycler>=0.10 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from matplotlib==3.10.9->-r requirements.txt (line 8)) (0.12.1)

Requirement already satisfied: fonttools>=4.22.0 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from matplotlib==3.10.9->-r requirements.txt (line 8)) (4.62.1)

Requirement already satisfied: kiwisolver>=1.3.1 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from matplotlib==3.10.9->-r requirements.txt (line 8)) (1.5.0)

Requirement already satisfied: numpy>=1.23 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from matplotlib==3.10.9->-r requirements.txt (line 8)) (2.4.4)

Requirement already satisfied: pillow>=8 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from matplotlib==3.10.9->-r requirements.txt (line 8)) (12.2.0)

Requirement already satisfied: pyparsing>=3 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from matplotlib==3.10.9->-r requirements.txt (line 8)) (3.3.2)

Requirement already satisfied: python-dateutil>=2.7 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from matplotlib==3.10.9->-r requirements.txt (line 8)) (2.9.0.post0)

Requirement already satisfied: protobuf>=4.25.1 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from onnx==1.21.0->-r requirements.txt (line 10)) (7.34.1)

Requirement already satisfied: typing_extensions>=4.7.1 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from onnx==1.21.0->-r requirements.txt (line 10)) (4.15.0)

Requirement already satisfied: onnx_ir<2,>=0.1.16 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from onnxscript==0.7.0->-r requirements.txt (line 11)) (0.2.1)

Requirement already satisfied: filelock in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from torch==2.8.0->-r requirements.txt (line 12)) (3.29.0)

Requirement already satisfied: networkx in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from torch==2.8.0->-r requirements.txt (line 12)) (3.6.1)

Requirement already satisfied: fsspec in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from torch==2.8.0->-r requirements.txt (line 12)) (2026.3.0)

Requirement already satisfied: mpmath<1.4,>=1.1.0 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from sympy==1.14.0->-r requirements.txt (line 17)) (1.3.0)

Requirement already satisfied: anyio in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from httpx<1,>=0.25.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (4.13.0)

Requirement already satisfied: certifi in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from httpx<1,>=0.25.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (2026.4.22)

Requirement already satisfied: httpcore==1.* in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from httpx<1,>=0.25.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (1.0.9)

Requirement already satisfied: idna in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from httpx<1,>=0.25.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (3.13)

Requirement already satisfied: h11>=0.16 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from httpcore==1.*->httpx<1,>=0.25.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (0.16.0)

Requirement already satisfied: comm>=0.1.1 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from ipykernel!=6.30.0,>=6.5.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (0.2.3)

Requirement already satisfied: debugpy>=1.6.5 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from ipykernel!=6.30.0,>=6.5.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (1.8.20)

Requirement already satisfied: ipython>=7.23.1 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from ipykernel!=6.30.0,>=6.5.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (9.13.0)

Requirement already satisfied: jupyter-client>=8.8.0 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from ipykernel!=6.30.0,>=6.5.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (8.8.0)

Requirement already satisfied: matplotlib-inline>=0.1 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from ipykernel!=6.30.0,>=6.5.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (0.2.1)

Requirement already satisfied: nest-asyncio>=1.4 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from ipykernel!=6.30.0,>=6.5.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (1.6.0)

Requirement already satisfied: MarkupSafe>=2.0 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jinja2>=3.0.3->jupyterlab==4.5.6->-r requirements.txt (line 5)) (3.0.3)

Requirement already satisfied: platformdirs>=2.5 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jupyter-core->jupyterlab==4.5.6->-r requirements.txt (line 5)) (4.9.6)

Requirement already satisfied: argon2-cffi>=21.1 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jupyter-server<3,>=2.4.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (25.1.0)

Requirement already satisfied: jupyter-events>=0.11.0 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jupyter-server<3,>=2.4.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (0.12.1)

Requirement already satisfied: jupyter-server-terminals>=0.4.4 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jupyter-server<3,>=2.4.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (0.5.4)

Requirement already satisfied: nbconvert>=6.4.4 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jupyter-server<3,>=2.4.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (7.17.1)

Requirement already satisfied: overrides>=5.0 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jupyter-server<3,>=2.4.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (7.7.0)

Requirement already satisfied: send2trash>=1.8.2 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jupyter-server<3,>=2.4.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (2.1.0)

Requirement already satisfied: terminado>=0.8.3 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jupyter-server<3,>=2.4.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (0.18.1)

Requirement already satisfied: websocket-client>=1.7 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jupyter-server<3,>=2.4.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (1.9.0)

Requirement already satisfied: babel>=2.10 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jupyterlab-server<3,>=2.28.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (2.18.0)

Requirement already satisfied: json5>=0.9.0 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jupyterlab-server<3,>=2.28.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (0.14.0)

Requirement already satisfied: jsonschema>=4.18.0 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jupyterlab-server<3,>=2.28.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (4.26.0)

Requirement already satisfied: requests>=2.31 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jupyterlab-server<3,>=2.28.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (2.33.1)

Requirement already satisfied: colorama in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from nbdime~=4.0.1->jupyterlab-git==0.52.0->-r requirements.txt (line 6)) (0.4.6)

Requirement already satisfied: gitpython!=2.1.4,!=2.1.5,!=2.1.6 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from nbdime~=4.0.1->jupyterlab-git==0.52.0->-r requirements.txt (line 6)) (3.1.47)

Requirement already satisfied: pygments in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from nbdime~=4.0.1->jupyterlab-git==0.52.0->-r requirements.txt (line 6)) (2.20.0)

Requirement already satisfied: fastjsonschema>=2.15 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from nbformat->jupyterlab-git==0.52.0->-r requirements.txt (line 6)) (2.21.2)

Requirement already satisfied: six>=1.5 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from python-dateutil>=2.7->matplotlib==3.10.9->-r requirements.txt (line 8)) (1.17.0)

Requirement already satisfied: ptyprocess>=0.5 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from pexpect->jupyterlab-git==0.52.0->-r requirements.txt (line 6)) (0.7.0)

Requirement already satisfied: argon2-cffi-bindings in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from argon2-cffi>=21.1->jupyter-server<3,>=2.4.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (25.1.0)

Requirement already satisfied: gitdb<5,>=4.0.1 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from gitpython!=2.1.4,!=2.1.5,!=2.1.6->nbdime~=4.0.1->jupyterlab-git==0.52.0->-r requirements.txt (line 6)) (4.0.12)

Requirement already satisfied: ipython-pygments-lexers>=1.0.0 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from ipython>=7.23.1->ipykernel!=6.30.0,>=6.5.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (1.1.1)

Requirement already satisfied: jedi>=0.18.2 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from ipython>=7.23.1->ipykernel!=6.30.0,>=6.5.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (0.19.2)

Requirement already satisfied: prompt_toolkit<3.1.0,>=3.0.41 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from ipython>=7.23.1->ipykernel!=6.30.0,>=6.5.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (3.0.52)

Requirement already satisfied: stack_data>=0.6.0 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from ipython>=7.23.1->ipykernel!=6.30.0,>=6.5.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (0.6.3)

Requirement already satisfied: jsonschema-specifications>=2023.03.6 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jsonschema>=4.18.0->jupyterlab-server<3,>=2.28.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (2025.9.1)

Requirement already satisfied: referencing>=0.28.4 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jsonschema>=4.18.0->jupyterlab-server<3,>=2.28.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (0.37.0)

Requirement already satisfied: rpds-py>=0.25.0 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jsonschema>=4.18.0->jupyterlab-server<3,>=2.28.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (0.30.0)

Requirement already satisfied: python-json-logger>=2.0.4 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jupyter-events>=0.11.0->jupyter-server<3,>=2.4.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (4.1.0)

Requirement already satisfied: rfc3339-validator in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jupyter-events>=0.11.0->jupyter-server<3,>=2.4.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (0.1.4)

Requirement already satisfied: rfc3986-validator>=0.1.1 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jupyter-events>=0.11.0->jupyter-server<3,>=2.4.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (0.1.1)

Requirement already satisfied: beautifulsoup4 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from nbconvert>=6.4.4->jupyter-server<3,>=2.4.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (4.14.3)

Requirement already satisfied: bleach!=5.0.0 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from bleach[css]!=5.0.0->nbconvert>=6.4.4->jupyter-server<3,>=2.4.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (6.3.0)

Requirement already satisfied: defusedxml in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from nbconvert>=6.4.4->jupyter-server<3,>=2.4.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (0.7.1)

Requirement already satisfied: jupyterlab-pygments in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from nbconvert>=6.4.4->jupyter-server<3,>=2.4.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (0.3.0)

Requirement already satisfied: mistune<4,>=2.0.3 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from nbconvert>=6.4.4->jupyter-server<3,>=2.4.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (3.2.0)

Requirement already satisfied: nbclient>=0.5.0 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from nbconvert>=6.4.4->jupyter-server<3,>=2.4.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (0.10.4)

Requirement already satisfied: pandocfilters>=1.4.1 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from nbconvert>=6.4.4->jupyter-server<3,>=2.4.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (1.5.1)

Requirement already satisfied: charset_normalizer<4,>=2 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from requests>=2.31->jupyterlab-server<3,>=2.28.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (3.4.7)

Requirement already satisfied: urllib3<3,>=1.26 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from requests>=2.31->jupyterlab-server<3,>=2.28.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (2.6.3)

Requirement already satisfied: webencodings in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from bleach!=5.0.0->bleach[css]!=5.0.0->nbconvert>=6.4.4->jupyter-server<3,>=2.4.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (0.5.1)

Requirement already satisfied: tinycss2<1.5,>=1.1.0 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from bleach[css]!=5.0.0->nbconvert>=6.4.4->jupyter-server<3,>=2.4.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (1.4.0)

Requirement already satisfied: smmap<6,>=3.0.1 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from gitdb<5,>=4.0.1->gitpython!=2.1.4,!=2.1.5,!=2.1.6->nbdime~=4.0.1->jupyterlab-git==0.52.0->-r requirements.txt (line 6)) (5.0.3)

Requirement already satisfied: parso<0.9.0,>=0.8.4 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jedi>=0.18.2->ipython>=7.23.1->ipykernel!=6.30.0,>=6.5.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (0.8.6)

Requirement already satisfied: fqdn in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jsonschema[format-nongpl]>=4.18.0->jupyter-events>=0.11.0->jupyter-server<3,>=2.4.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (1.5.1)

Requirement already satisfied: isoduration in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jsonschema[format-nongpl]>=4.18.0->jupyter-events>=0.11.0->jupyter-server<3,>=2.4.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (20.11.0)

Requirement already satisfied: jsonpointer>1.13 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jsonschema[format-nongpl]>=4.18.0->jupyter-events>=0.11.0->jupyter-server<3,>=2.4.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (3.1.1)

Requirement already satisfied: rfc3987-syntax>=1.1.0 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jsonschema[format-nongpl]>=4.18.0->jupyter-events>=0.11.0->jupyter-server<3,>=2.4.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (1.1.0)

Requirement already satisfied: uri-template in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jsonschema[format-nongpl]>=4.18.0->jupyter-events>=0.11.0->jupyter-server<3,>=2.4.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (1.3.0)

Requirement already satisfied: webcolors>=24.6.0 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from jsonschema[format-nongpl]>=4.18.0->jupyter-events>=0.11.0->jupyter-server<3,>=2.4.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (25.10.0)

Requirement already satisfied: wcwidth in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from prompt_toolkit<3.1.0,>=3.0.41->ipython>=7.23.1->ipykernel!=6.30.0,>=6.5.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (0.6.0)

Requirement already satisfied: executing>=1.2.0 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from stack_data>=0.6.0->ipython>=7.23.1->ipykernel!=6.30.0,>=6.5.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (2.2.1)

Requirement already satisfied: asttokens>=2.1.0 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from stack_data>=0.6.0->ipython>=7.23.1->ipykernel!=6.30.0,>=6.5.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (3.0.1)

Requirement already satisfied: pure-eval in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from stack_data>=0.6.0->ipython>=7.23.1->ipykernel!=6.30.0,>=6.5.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (0.2.3)

Requirement already satisfied: cffi>=1.0.1 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from argon2-cffi-bindings->argon2-cffi>=21.1->jupyter-server<3,>=2.4.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (2.0.0)

Requirement already satisfied: soupsieve>=1.6.1 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from beautifulsoup4->nbconvert>=6.4.4->jupyter-server<3,>=2.4.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (2.8.3)

Requirement already satisfied: pycparser in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from cffi>=1.0.1->argon2-cffi-bindings->argon2-cffi>=21.1->jupyter-server<3,>=2.4.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (3.0)

Requirement already satisfied: lark>=1.2.2 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from rfc3987-syntax>=1.1.0->jsonschema[format-nongpl]>=4.18.0->jupyter-events>=0.11.0->jupyter-server<3,>=2.4.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (1.3.1)

Requirement already satisfied: arrow>=0.15.0 in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from isoduration->jsonschema[format-nongpl]>=4.18.0->jupyter-events>=0.11.0->jupyter-server<3,>=2.4.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (1.4.0)

Requirement already satisfied: tzdata in /home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages (from arrow>=0.15.0->isoduration->jsonschema[format-nongpl]>=4.18.0->jupyter-events>=0.11.0->jupyter-server<3,>=2.4.0->jupyterlab==4.5.6->-r requirements.txt (line 5)) (2026.2)

[notice] A new release of pip is available: 24.0 -> 26.1

[notice] To update, run: python -m pip install --upgrade pip

Note: you may need to restart the kernel to use updated packages.
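After installation, it can be useful to confirm that the installed versions match the pins listed earlier. The following is a minimal sketch using only the standard library; the helper name check_versions and the EXPECTED dictionary are illustrative and not part of the original notebook. It assumes an exact string match, so a build that reports a local version suffix would be flagged as a mismatch.

```python
from importlib import metadata

# Pins copied from the dependency list in this article.
EXPECTED = {
    "torch": "2.8.0",
    "torchvision": "0.23.0",
    "torch-npu": "2.8.0",
    "onnx": "1.21.0",
    "onnxscript": "0.7.0",
}


def check_versions(expected: dict[str, str]) -> dict[str, str]:
    """Map each package to "ok", "missing", or the mismatching installed version."""
    report = {}
    for name, wanted in expected.items():
        try:
            installed = metadata.version(name)
        except metadata.PackageNotFoundError:
            report[name] = "missing"
            continue
        report[name] = "ok" if installed == wanted else f"found {installed}"
    return report


# Print one line per package so mismatches are easy to spot.
for package, status in check_versions(EXPECTED).items():
    print(f"{package:12s} {status}")
```

CANN is not a Python package, so it is not covered by this check.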

Confirming that NPU acceleration is available

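The torch.npu.is_available function reports whether Ascend NPU acceleration is available. The torch.npu namespace is registered when torch_npu is imported, so the import below is required even though torch_npu is not referenced directly.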

import torch

import torch_npu

torch.npu.is_available()

/home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages/torch_npu/utils/collect_env.py:58: UserWarning: Warning: The /usr/local/Ascend/cann-8.5.0 owner does not match the current owner.

warnings.warn(f"Warning: The {path} owner does not match the current owner.")

/home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages/torch_npu/utils/collect_env.py:58: UserWarning: Warning: The /usr/local/Ascend/cann-8.5.0/aarch64-linux/ascend_toolkit_install.info owner does not match the current owner.

warnings.warn(f"Warning: The {path} owner does not match the current owner.")

/home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages/torch_npu/utils/collect_env.py:58: UserWarning: Warning: The /usr/local/Ascend/cann-8.5.0 owner does not match the current owner.

warnings.warn(f"Warning: The {path} owner does not match the current owner.")

/home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages/torch_npu/utils/collect_env.py:58: UserWarning: Warning: The /usr/local/Ascend/cann-8.5.0/aarch64-linux/ascend_toolkit_install.info owner does not match the current owner.

warnings.warn(f"Warning: The {path} owner does not match the current owner.")

/home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages/torch_npu/__init__.py:309: UserWarning: On the interactive interface, the value of TASK_QUEUE_ENABLE is set to 0 by default. Do not set it to 1 to prevent some unknown errors

warnings.warn("On the interactive interface, the value of TASK_QUEUE_ENABLE is set to 0 by default. \

True

These warnings can be safely ignored. If you see messages starting with [ERROR] or [CRITICAL] instead, consult the Ascend forum for assistance.
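If the ownership warnings clutter the output, one option is to filter them with Python's standard warnings module before torch_npu is imported. The sketch below is an assumption about how one might do this, not something the original notebook does. The helper name silence_ownership_warnings is hypothetical and the pattern is copied from the warning text above. The filter is demonstrated on locally raised warnings so that the block runs without an NPU.

```python
import warnings


def silence_ownership_warnings():
    """Ignore UserWarnings whose text mentions the CANN ownership mismatch."""
    warnings.filterwarnings(
        "ignore",
        # The pattern is a regex matched from the start of the message
        message=r".*owner does not match the current owner",
        category=UserWarning,
    )


# Demonstration on synthetic warnings; the filters are restored on exit
with warnings.catch_warnings(record=True) as caught:
    warnings.simplefilter("always")
    silence_ownership_warnings()
    warnings.warn(
        "Warning: The /tmp/example owner does not match the current owner.",
        UserWarning,
    )
    warnings.warn("something unrelated", UserWarning)

remaining = [str(w.message) for w in caught]
print(remaining)
```

In a notebook, the helper would be called once in the first cell, before import torch_npu, so that the filter is already installed when the warnings are raised.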

As shown in the output above, NPU acceleration is available on our OrangePi AIpro (20T) development board which includes a single Ascend 310B1 NPU chip and core.

We can also get the number of available NPUs with torch.npu.device_count and the current device ID with torch.npu.current_device.

torch.npu.device_count()

1

torch.npu.current_device()

0

Our OrangePi AIpro (20T) development board has a single NPU chip and core so PyTorch returns 1 for the device count and 0 for the current device ID as expected.
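For scripts that should also run on machines without an Ascend NPU, a small guard can fall back to the CPU. This is a sketch rather than part of the original notebook: pick_device is a hypothetical helper, and it relies only on torch.npu.is_available as used above.

```python
def pick_device():
    """Return 'npu:0' when an Ascend NPU is usable, otherwise 'cpu'."""
    try:
        import torch
        import torch_npu  # noqa: F401  (the import makes torch.npu available)

        if torch.npu.is_available():
            return "npu:0"
    except Exception:
        # torch or torch_npu is missing, or the CANN runtime failed to load
        pass
    return "cpu"


device_name = pick_device()
print(device_name)
```

The returned string can be passed wherever a device is accepted, for example as the device parameter of a tensor constructor.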

Let’s verify the results from PyTorch with the npu-smi command-line utility.

!npu-smi info

+--------------------------------------------------------------------------------------------------------+

| npu-smi 23.0.0 Version: 23.0.0 |

+-------------------------------+-----------------+------------------------------------------------------+

| NPU Name | Health | Power(W) Temp(C) Hugepages-Usage(page) |

| Chip Device | Bus-Id | AICore(%) Memory-Usage(MB) |

+===============================+=================+======================================================+

| 0 310B1 | Alarm | 0.0 53 24 / 24 |

| 0 0 | NA | 0 7630 / 23673 |

+===============================+=================+======================================================+

As reported above, we have a single Ascend 310B1 NPU chip and core on our development board.

Copying tensors to device memory

PyTorch tensors reside in main memory by default. Main memory is also commonly known as random access memory (RAM) or CPU RAM.

Let’s create a $2 \times 2$ matrix with torch.randn and query its device attribute to confirm that our tensor resides in main memory.

A = torch.randn(2, 2)

A, A.device

(tensor([[ 0.0325, 0.2070],

[-0.4967, -1.5113]]),

device(type='cpu'))

The device attribute returns device(type='cpu') which confirms our tensor is residing in main memory. In many cases, we’ll want to copy our tensor to NPU device memory. This allows us to perform computationally intensive operations such as matrix multiplication directly on the NPU device to speed up the model training process.

Let’s use the npu method on our tensor to move it to (NPU) device memory and confirm that the device attribute on our tensor is updated appropriately.

A = A.npu()

A, A.device

[W501 10:43:25.136407353 compiler_depend.ts:164] Warning: Device do not support double dtype now, dtype cast replace with float. (function operator())

.

(tensor([[ 0.0325, 0.2070],

[-0.4967, -1.5113]], device='npu:0'),

device(type='npu', index=0))

The device attribute of our resulting tensor now reports device(type='npu', index=0), confirming that it is now copied to device memory.

Initializing tensors directly to device memory

Instead of creating our tensors in main memory only to copy them to device memory, we can initialize our tensors directly in device memory. This spares us the cost of copying data between main memory and device memory, which adds non-trivial communication overhead.

Use torch.device to construct an object representing our NPU device, then specify it in the device parameter of tensor constructors such as torch.randn. Alternatively, we can specify the device parameter during tensor initialization as the string 'npu:0' directly - both are functionally equivalent.

npu_0 = torch.device('npu:0')

npu_0

device(type='npu', index=0)

M0 = torch.randn(2, 2, device=npu_0)

M1 = torch.randn(2, 2, device='npu:0')

M0, M1, M0.device, M1.device

(tensor([[-0.1831, 0.7490],

[-0.2751, 0.5227]], device='npu:0'),

tensor([[ 0.7776, -1.0241],

[-0.6603, 0.9278]], device='npu:0'),

device(type='npu', index=0),

device(type='npu', index=0))

Let’s multiply the 2 matrices with the @ operator. As both matrices are on the same NPU device with index 0, the product also automatically resides on the same NPU device memory.

M = M0 @ M1

M, M.device

(tensor([[-0.6370, 0.8825],

[-0.5591, 0.7667]], device='npu:0'),

device(type='npu', index=0))
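As a sanity check, the product can be reproduced by hand from the printed entries. The sketch below uses plain Python lists rather than tensors. Because the printed values are rounded to four decimals, agreement is only expected to about three decimal places.

```python
def matmul_2x2(a, b):
    """Multiply two 2x2 matrices given as nested lists."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
        for i in range(2)
    ]


# Entries copied from the notebook output above
M0 = [[-0.1831, 0.7490], [-0.2751, 0.5227]]
M1 = [[0.7776, -1.0241], [-0.6603, 0.9278]]
reported = [[-0.6370, 0.8825], [-0.5591, 0.7667]]

product = matmul_2x2(M0, M1)
max_error = max(
    abs(product[i][j] - reported[i][j]) for i in range(2) for j in range(2)
)
print(product)
print(max_error)
```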

Automatic migration of PyTorch CUDA code to Ascend

Imagine you have a deep learning project written in PyTorch leveraging NVIDIA’s CUDA framework for GPU acceleration. Your codebase may already be riddled with CUDA calls such as X.cuda().

To migrate your codebase to run on Ascend NPUs, you would normally need to refactor it manually, replacing the CUDA calls with NPU calls: X.cuda() $\rightarrow$ X.npu(). While this is relatively straightforward in itself, it is grunt work that produces no business value and that you would rather avoid.

Fortunately, the torch_npu.contrib module provides the transfer_to_npu submodule which you can import by adding a single line to your existing codebase. It automatically intercepts CUDA calls and converts them to NPU calls under the hood.
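To build intuition for what "intercepting" means here, the toy sketch below rebinds a method at import time and rewrites device strings. It only illustrates the general monkey-patching idea: ToyTensor, redirect_device and install_shim are hypothetical names, and this is not how torch_npu is actually implemented.

```python
class ToyTensor:
    """Hypothetical stand-in for a tensor; not a real PyTorch class."""

    def __init__(self, device="cpu"):
        self.device = device

    def npu(self):
        return ToyTensor(device="npu:0")

    def cuda(self):
        raise RuntimeError("no CUDA device in this toy example")


def redirect_device(spec):
    """Rewrite 'cuda' or 'cuda:N' to 'npu' or 'npu:N'; leave other strings alone."""
    if spec == "cuda" or spec.startswith("cuda:"):
        return "npu" + spec[len("cuda"):]
    return spec


def install_shim():
    # Rebinding the method on the class changes every existing call site
    ToyTensor.cuda = ToyTensor.npu


install_shim()
moved = ToyTensor().cuda()  # now served by ToyTensor.npu
print(moved.device, redirect_device("cuda:0"), redirect_device("cpu"))
```

The appeal of this pattern is that call sites stay untouched, which is why a single import line can be enough for the real plugin.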

Let’s see it in action!

from torch_npu.contrib import transfer_to_npu

torch.cuda.is_available()

/home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages/torch_npu/contrib/transfer_to_npu.py:347: ImportWarning:

*************************************************************************************************************

The torch.Tensor.cuda and torch.nn.Module.cuda are replaced with torch.Tensor.npu and torch.nn.Module.npu now..

The torch.cuda.DoubleTensor is replaced with torch.npu.FloatTensor cause the double type is not supported now..

The backend in torch.distributed.init_process_group set to hccl now..

The torch.cuda.* and torch.cuda.amp.* are replaced with torch.npu.* and torch.npu.amp.* now..

The device parameters have been replaced with npu in the function below:

torch.logspace, torch.randint, torch.hann_window, torch.rand, torch.full_like, torch.ones_like, torch.rand_like, torch.randperm, torch.arange, torch.frombuffer, torch.normal, torch._empty_per_channel_affine_quantized, torch.empty_strided, torch.empty_like, torch.scalar_tensor, torch.tril_indices, torch.bartlett_window, torch.ones, torch.sparse_coo_tensor, torch.randn, torch.kaiser_window, torch.tensor, torch.triu_indices, torch.as_tensor, torch.zeros, torch.randint_like, torch.full, torch.eye, torch._sparse_csr_tensor_unsafe, torch.empty, torch._sparse_coo_tensor_unsafe, torch.blackman_window, torch.zeros_like, torch.range, torch.sparse_csr_tensor, torch.randn_like, torch.from_file, torch._cudnn_init_dropout_state, torch._empty_affine_quantized, torch.linspace, torch.hamming_window, torch.empty_quantized, torch._pin_memory, torch.load, torch.set_default_device, torch.get_device_module, torch.sparse_compressed_tensor, torch.Tensor.new_empty, torch.Tensor.new_empty_strided, torch.Tensor.new_full, torch.Tensor.new_ones, torch.Tensor.new_tensor, torch.Tensor.new_zeros, torch.Tensor.to, torch.Tensor.pin_memory, torch.nn.Module.to, torch.nn.Module.to_empty

*************************************************************************************************************

warnings.warn(msg, ImportWarning)

/home/HwHiAiUser/.pyenv/versions/3.11.15/envs/orangepiaipro-20t/lib/python3.11/site-packages/torch_npu/contrib/transfer_to_npu.py:276: RuntimeWarning: torch.jit.script and torch.jit.script_method will be disabled by transfer_to_npu, which currently does not support them, if you need to enable them, please do not use transfer_to_npu.

warnings.warn(msg, RuntimeWarning)

True

torch.cuda.device_count(), torch.cuda.current_device()

(1, 0)

gpu_0 = torch.device('cuda:0')

gpu_0

device(type='cuda', index=0)

P0 = torch.randn(2, 2, device=gpu_0)

P1 = torch.randn(2, 2, device='cuda:0')

P0, P1, P0.device, P1.device

(tensor([[-3.4023, -1.1358],

[-1.0057, 0.0248]], device='npu:0'),

tensor([[-0.8602, -0.5356],

[-0.5590, 0.0599]], device='npu:0'),

device(type='npu', index=0),

device(type='npu', index=0))

P = P0 @ P1

P, P.device

(tensor([[3.5616, 1.7543],

[0.8512, 0.5401]], device='npu:0'),

device(type='npu', index=0))

With our PyTorch CUDA codebase seamlessly migrated to Ascend NPU, let’s see a more realistic example in action - training a multilayer perceptron (MLP) model on the Fashion MNIST dataset. We’ll use CUDA calls throughout our motivating example to demonstrate that none of your existing code needs to be manually migrated - what worked on NVIDIA GPUs will continue to work on Ascend NPUs as-is!

Training a deep neural network on the Fashion MNIST dataset

Let’s train a deep neural network on the Fashion MNIST dataset. Before that, let’s load the dataset and visualize a few samples to understand what kind of data we’re working with.

This example is based on the official Training with PyTorch tutorial but we won’t be using convolutional neural networks (CNNs) here since they are covered in chapter 7 of D2L which we haven’t reached yet. Instead, we’ll just use a simple MLP model with multiple fully connected layers and dropout.

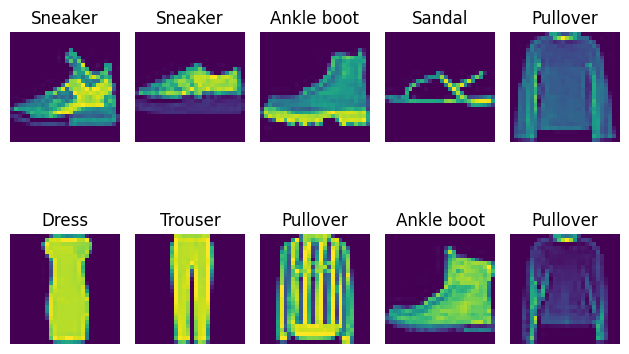

Visualizing the dataset

The torchvision.datasets.FashionMNIST class optionally downloads the dataset if download=True, then loads the dataset as PIL images and applies the transformation(s) defined in the transform parameter.

Let’s set train=True which fetches the training set and apply torchvision.transforms.ToTensor to convert the images to PyTorch tensors.

from torchvision import datasets, transforms

data_dir = 'data/'

visualize_data = datasets.FashionMNIST(root=data_dir,

train=True,

transform=transforms.ToTensor(),

download=True)

Next, we need to load and batch the data with torch.utils.data.DataLoader. Let’s specify a batch size of 10 and take just the first batch, visualizing the images and labels with Matplotlib.

from torch.utils.data import DataLoader

visualize_loader = DataLoader(visualize_data, batch_size=10, shuffle=True)

X_samples, y_samples = next(iter(visualize_loader))

X_samples.shape, y_samples.shape, X_samples.device, y_samples.device

(torch.Size([10, 1, 28, 28]),

torch.Size([10]),

device(type='cpu'),

device(type='cpu'))

Note that the loaded samples reside in main memory. That’s fine since we’re just visualizing the images with Matplotlib - no need to use our Ascend NPU for now.

X_samples, y_samples = X_samples.numpy(), y_samples.numpy()

X_samples = X_samples.transpose(0, 2, 3, 1) # Convert CHW format back to HWC

X_samples.shape, y_samples.shape

((10, 28, 28, 1), (10,))

import matplotlib.pyplot as plt

LABELS = [

'T-shirt/top',

'Trouser',

'Pullover',

'Dress',

'Coat',

'Sandal',

'Shirt',

'Sneaker',

'Bag',

'Ankle boot'

]

fig, axes = plt.subplots(2, 5)

for axis_idx, axis in enumerate(axes.flatten()):

    axis.set_title(LABELS[y_samples[axis_idx]])

    axis.imshow(X_samples[axis_idx])

    axis.axis('off')

fig.tight_layout()

fig.show()

Loading and transforming our dataset

Now that we know what our data looks like, let’s load and transform it into a format suitable for training our model.

The images are transformed via the following 2-step process, which is handled automatically by the torchvision.transforms.ToTensor transformation we saw earlier. No resizing is needed: every Fashion MNIST image is already $28 \times 28$ pixels, and ToTensor does not resize.

- Rescale all channels from integer in range $[0, 255]$ to float in range $[0, 1]$

- Change the shape of each image from format (height, width, channels) to (channels, height, width)
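The rescaling and axis reordering can be sketched with plain nested lists. This is an illustrative re-implementation under the assumption of 8-bit input, not torchvision's actual code, and to_tensor_like is a hypothetical name.

```python
def to_tensor_like(image_hwc):
    """Convert a nested list [H][W][C] of ints in 0..255
    to a nested list [C][H][W] of floats in 0..1."""
    height = len(image_hwc)
    width = len(image_hwc[0])
    channels = len(image_hwc[0][0])
    return [
        [[image_hwc[h][w][c] / 255.0 for w in range(width)] for h in range(height)]
        for c in range(channels)
    ]


# A tiny 2x2 grayscale "image" with a single channel
tiny = [[[0], [255]], [[51], [102]]]
converted = to_tensor_like(tiny)
print(converted)
```

The result has one channel containing a 2x2 grid, mirroring how a Fashion MNIST image of shape (28, 28, 1) becomes a tensor of shape (1, 28, 28).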

Unlike MindSpore 2.8.0, PyTorch recommends we keep the labels as class indices without one-hot encoding for optimized computation of the torch.nn.CrossEntropyLoss we’ll see later.

train_data = datasets.FashionMNIST(root=data_dir,

train=True,

transform=transforms.ToTensor())

test_data = datasets.FashionMNIST(root=data_dir,

train=False,

transform=transforms.ToTensor())

For training, we’ll split our data in batches of $2^9=512$ samples. For validation, we’ll use a single batch of $10,000$ samples.

train_loader = DataLoader(train_data, batch_size=512, shuffle=True)

test_loader = DataLoader(test_data, batch_size=10000, shuffle=True)
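The batch counts implied by these settings can be worked out with a little arithmetic. The sketch below assumes the standard Fashion MNIST split of 60,000 training and 10,000 test images, and DataLoader's default behavior of keeping the final partial batch.

```python
import math

train_samples = 60_000  # size of the Fashion MNIST training split
test_samples = 10_000   # size of the Fashion MNIST test split
train_batch_size = 512
test_batch_size = 10_000

train_batches = math.ceil(train_samples / train_batch_size)
last_batch_size = train_samples - (train_batches - 1) * train_batch_size
test_batches = math.ceil(test_samples / test_batch_size)

print(train_batches, last_batch_size, test_batches)  # 118 96 1
```

The 118 training batches per epoch match the [n/118] counters printed in the training log, and the last batch of each epoch holds only 96 samples.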

Defining our neural network, optimizer, activation and loss functions

Here’s the neural network we’ll be using.

- Flatten the images to $28 \times 28 \times 1 = 784$ features

- 1st hidden layer with $784$ input features and $2^{10}=1024$ output features, ReLU activation

- 1st dropout layer with $p = 0.3$

- 2nd hidden layer with $1024$ input features and $2^9=512$ output features, ReLU activation

- 2nd dropout layer with $p = 0.2$

- Fully connected output layer with $512$ input features and $10$ output features, one per class

We’ll also copy our model to device memory using the to method. Confirm that our resulting model is in device memory by accessing its parameters with the parameters method which returns an iterator.

import torch.nn as nn

model = nn.Sequential(

nn.Flatten(),

nn.Linear(784, 1024),

nn.ReLU(),

nn.Dropout(0.3),

nn.Linear(1024, 512),

nn.ReLU(),

nn.Dropout(0.2),

nn.Linear(512, 10)

).to(gpu_0)

model, next(model.parameters()).device

(Sequential(

(0): Flatten(start_dim=1, end_dim=-1)

(1): Linear(in_features=784, out_features=1024, bias=True)

(2): ReLU()

(3): Dropout(p=0.3, inplace=False)

(4): Linear(in_features=1024, out_features=512, bias=True)

(5): ReLU()

(6): Dropout(p=0.2, inplace=False)

(7): Linear(in_features=512, out_features=10, bias=True)

),

device(type='npu', index=0))
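To get a feel for the size of this model, the number of trainable parameters can be counted by hand. Each linear layer contributes one weight per input/output pair plus one bias per output, while the flatten, ReLU and dropout layers have no parameters.

```python
# (in_features, out_features) of the three linear layers printed above
layer_shapes = [(784, 1024), (1024, 512), (512, 10)]

# Weights plus biases for each layer
per_layer = [n_in * n_out + n_out for n_in, n_out in layer_shapes]
total_params = sum(per_layer)

print(per_layer, total_params)  # [803840, 524800, 5130] 1333770
```

On a live model, the same total should be obtainable with sum(p.numel() for p in model.parameters()).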

Use torch.nn.CrossEntropyLoss for our loss function. It combines softmax activation and cross-entropy loss under the hood using the LogSumExp trick to prevent numerical underflow and overflow.

criterion = nn.CrossEntropyLoss()

criterion

CrossEntropyLoss()
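The LogSumExp trick can be illustrated in plain Python. The sketch below is a minimal single-sample version of the idea, not PyTorch's actual kernel, and cross_entropy_from_logits is a hypothetical name. Subtracting the largest logit before exponentiating keeps every exponent at or below zero, so nothing overflows.

```python
import math


def cross_entropy_from_logits(logits, target):
    """Cross-entropy of one sample: logsumexp(logits) - logits[target]."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[target]


# An untrained 10-class model with equal logits has loss ln(10)
uniform_loss = cross_entropy_from_logits([0.0] * 10, target=3)

# Extreme logits are handled without overflow
extreme_loss = cross_entropy_from_logits([1000.0, 0.0], target=0)

# The naive route, exponentiating the raw logit, overflows
try:
    math.exp(1000.0)
    naive_overflows = False
except OverflowError:
    naive_overflows = True

print(f"{uniform_loss:.4f}", extreme_loss, naive_overflows)
```

The uniform case gives $\ln 10 \approx 2.3026$, which is close to the first training loss of 2.3067 reported in the training log, as expected for a freshly initialized 10-class classifier.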

For the optimizer, use torch.optim.SGD with the following hyperparameters.

- Learning rate: 0.01

- Weight decay: 1e-3

- Momentum: 0.9

import torch.optim as optim

optimizer = optim.SGD(params=model.parameters(), lr=0.01, weight_decay=1e-3, momentum=0.9)

optimizer

SGD (

Parameter Group 0

dampening: 0

differentiable: False

foreach: None

fused: None

lr: 0.01

maximize: False

momentum: 0.9

nesterov: False

weight_decay: 0.001

)
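To make the three hyperparameters concrete, here is a scalar sketch of the update that SGD with momentum and weight decay performs, following the rule in the PyTorch documentation for the settings printed above (dampening 0, nesterov False). The function sgd_step is a hypothetical helper for illustration, not the optimizer's real code.

```python
def sgd_step(param, grad, buf, lr=0.01, momentum=0.9, weight_decay=1e-3):
    """One SGD update for a single scalar parameter.

    buf is the momentum buffer; pass None on the first step.
    """
    g = grad + weight_decay * param                  # L2 penalty folded into the gradient
    buf = g if buf is None else momentum * buf + g   # momentum accumulation
    return param - lr * buf, buf


p, b = 1.0, None
p, b = sgd_step(p, 0.5, b)
first = p
p, b = sgd_step(p, 0.5, b)
second = p
print(first, second)
```

With a constant gradient, the second step is nearly twice as large as the first because the momentum buffer carries over 90% of the previous update direction.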

With our neural network, combined activation + loss function, optimizer and hyperparameters defined, it’s time to define our training loop.

Training our neural network

Let’s train our model over 20 epochs. We define the per-batch and per-epoch training logic as below.

def train_batch(X_batch, y_batch):

    optimizer.zero_grad()

    y_hat = model(X_batch)

    loss = criterion(y_hat, y_batch)

    loss.backward()

    optimizer.step()

    return loss.item()

def train_epoch(epoch=0):

    training_losses = []

    print(f'Epoch {epoch} start')

    model.train(True)

    batch_count = len(train_loader)

    for batch_idx, (X_batch, y_batch) in enumerate(train_loader):

        loss = train_batch(X_batch.to(gpu_0), y_batch.to(gpu_0))

        if batch_idx % 10 == 0:

            print(f'Training loss: {loss:.4f} [{batch_idx}/{batch_count}]')

        # Record the loss of every batch, not only the ones that are printed
        training_losses.append(loss)

    print(f'Epoch {epoch} end')

    return training_losses

Define our validation logic at the end of each epoch as well.

def validate_epoch(epoch=0):

    model.eval()

    validation_loss = None

    with torch.no_grad():

        X_test, y_test = next(iter(test_loader))

        y_hat = model(X_test.to(gpu_0))

        validation_loss = criterion(y_hat, y_test.to(gpu_0))

    validation_loss = validation_loss.item()

    print(f'Validation loss at epoch {epoch}: {validation_loss:.4f}')

    return validation_loss

Now train our model using the utility functions defined above.

epochs = 20

training_losses = []

validation_losses = []

for epoch in range(epochs):

    training_losses.extend(train_epoch(epoch=epoch))

    validation_losses.append(validate_epoch(epoch=epoch))

len(training_losses), len(validation_losses)

Epoch 0 start

/usr/local/Ascend/cann-8.5.0/python/site-packages/tbe/common/context/op_context.py:38: DeprecationWarning: currentThread() is deprecated, use current_thread() instead

return _contexts.setdefault(threading.currentThread().ident, [])

..Training loss: 2.3067 [0/118]

Training loss: 2.2449 [10/118]

Training loss: 2.1330 [20/118]

Training loss: 1.9472 [30/118]

Training loss: 1.6020 [40/118]

Training loss: 1.3151 [50/118]

Training loss: 1.1242 [60/118]

Training loss: 0.9828 [70/118]

Training loss: 0.9193 [80/118]

Training loss: 0.9016 [90/118]

Training loss: 0.8678 [100/118]

Training loss: 0.8293 [110/118]

..Epoch 0 end

Validation loss at epoch 0: 0.7749

Epoch 1 start

Training loss: 0.7629 [0/118]

Training loss: 0.7416 [10/118]

Training loss: 0.7207 [20/118]

Training loss: 0.7641 [30/118]

Training loss: 0.7532 [40/118]

Training loss: 0.7241 [50/118]

Training loss: 0.7039 [60/118]

Training loss: 0.6603 [70/118]

Training loss: 0.7116 [80/118]

Training loss: 0.5626 [90/118]

Training loss: 0.6959 [100/118]

Training loss: 0.6535 [110/118]

Epoch 1 end

Validation loss at epoch 1: 0.6184

Epoch 2 start

Training loss: 0.7138 [0/118]

Training loss: 0.5561 [10/118]

Training loss: 0.5997 [20/118]

Training loss: 0.6026 [30/118]

Training loss: 0.5982 [40/118]

Training loss: 0.5553 [50/118]

Training loss: 0.6269 [60/118]

Training loss: 0.6159 [70/118]

Training loss: 0.6426 [80/118]

Training loss: 0.5798 [90/118]

Training loss: 0.6270 [100/118]

Training loss: 0.6189 [110/118]

Epoch 2 end

Validation loss at epoch 2: 0.5488

Epoch 3 start

Training loss: 0.5850 [0/118]

Training loss: 0.5595 [10/118]

Training loss: 0.5223 [20/118]

Training loss: 0.5472 [30/118]

Training loss: 0.5331 [40/118]

Training loss: 0.5496 [50/118]

Training loss: 0.5380 [60/118]

Training loss: 0.5581 [70/118]

Training loss: 0.5393 [80/118]

Training loss: 0.5286 [90/118]

Training loss: 0.5057 [100/118]

Training loss: 0.5996 [110/118]

Epoch 3 end

Validation loss at epoch 3: 0.5119

Epoch 4 start

Training loss: 0.5086 [0/118]

Training loss: 0.5163 [10/118]

Training loss: 0.5191 [20/118]

Training loss: 0.4908 [30/118]

Training loss: 0.4604 [40/118]

Training loss: 0.5194 [50/118]

Training loss: 0.5331 [60/118]

Training loss: 0.4916 [70/118]

Training loss: 0.5372 [80/118]

Training loss: 0.5035 [90/118]

Training loss: 0.5856 [100/118]

Training loss: 0.4825 [110/118]

Epoch 4 end

Validation loss at epoch 4: 0.4875

Epoch 5 start

Training loss: 0.4477 [0/118]

Training loss: 0.4837 [10/118]

Training loss: 0.4735 [20/118]

Training loss: 0.4469 [30/118]

Training loss: 0.4205 [40/118]

Training loss: 0.4833 [50/118]

Training loss: 0.4881 [60/118]

Training loss: 0.4871 [70/118]

Training loss: 0.4963 [80/118]

Training loss: 0.5154 [90/118]

Training loss: 0.4818 [100/118]

Training loss: 0.4846 [110/118]

Epoch 5 end

Validation loss at epoch 5: 0.4772

Epoch 6 start

Training loss: 0.4566 [0/118]

Training loss: 0.4809 [10/118]

Training loss: 0.4806 [20/118]

Training loss: 0.4843 [30/118]

Training loss: 0.4679 [40/118]

Training loss: 0.4649 [50/118]

Training loss: 0.5032 [60/118]

Training loss: 0.5153 [70/118]

Training loss: 0.4867 [80/118]

Training loss: 0.4000 [90/118]

Training loss: 0.4270 [100/118]

Training loss: 0.4309 [110/118]

Epoch 6 end

Validation loss at epoch 6: 0.4598

Epoch 7 start

Training loss: 0.4656 [0/118]

Training loss: 0.3989 [10/118]

Training loss: 0.4370 [20/118]

Training loss: 0.4929 [30/118]

Training loss: 0.4629 [40/118]

Training loss: 0.4670 [50/118]

Training loss: 0.4424 [60/118]

Training loss: 0.4256 [70/118]

Training loss: 0.4454 [80/118]

Training loss: 0.4492 [90/118]

Training loss: 0.4566 [100/118]

Training loss: 0.4117 [110/118]

Epoch 7 end

Validation loss at epoch 7: 0.4521

Epoch 8 start

Training loss: 0.4429 [0/118]

Training loss: 0.3813 [10/118]

Training loss: 0.4334 [20/118]

Training loss: 0.4130 [30/118]

Training loss: 0.4505 [40/118]

Training loss: 0.3780 [50/118]

Training loss: 0.4415 [60/118]

Training loss: 0.4462 [70/118]

Training loss: 0.4231 [80/118]

Training loss: 0.4326 [90/118]

Training loss: 0.4013 [100/118]

Training loss: 0.3960 [110/118]

Epoch 8 end

Validation loss at epoch 8: 0.4310

Epoch 9 start

Training loss: 0.4525 [0/118]

Training loss: 0.4325 [10/118]

Training loss: 0.4027 [20/118]

Training loss: 0.4604 [30/118]

Training loss: 0.4497 [40/118]

Training loss: 0.3951 [50/118]

Training loss: 0.4039 [60/118]

Training loss: 0.4220 [70/118]

Training loss: 0.4026 [80/118]

Training loss: 0.3540 [90/118]

Training loss: 0.3990 [100/118]

Training loss: 0.4156 [110/118]

Epoch 9 end

Validation loss at epoch 9: 0.4340

Epoch 10 start

Training loss: 0.3414 [0/118]

Training loss: 0.4657 [10/118]

Training loss: 0.4249 [20/118]

Training loss: 0.3894 [30/118]

Training loss: 0.4244 [40/118]

Training loss: 0.3674 [50/118]

Training loss: 0.4154 [60/118]

Training loss: 0.3856 [70/118]

Training loss: 0.4114 [80/118]

Training loss: 0.3649 [90/118]

Training loss: 0.4157 [100/118]

Training loss: 0.4477 [110/118]

Epoch 10 end

Validation loss at epoch 10: 0.4197

Epoch 11 start

Training loss: 0.4068 [0/118]

Training loss: 0.3972 [10/118]

Training loss: 0.3927 [20/118]

Training loss: 0.4212 [30/118]

Training loss: 0.4322 [40/118]

Training loss: 0.4423 [50/118]

Training loss: 0.3696 [60/118]

Training loss: 0.3813 [70/118]

Training loss: 0.4295 [80/118]

Training loss: 0.3709 [90/118]

Training loss: 0.4422 [100/118]

Training loss: 0.3961 [110/118]

Epoch 11 end

Validation loss at epoch 11: 0.4135

Epoch 12 start

Training loss: 0.3441 [0/118]

Training loss: 0.3853 [10/118]

Training loss: 0.4168 [20/118]

Training loss: 0.3616 [30/118]

Training loss: 0.4245 [40/118]

Training loss: 0.4083 [50/118]

Training loss: 0.3639 [60/118]

Training loss: 0.3572 [70/118]

Training loss: 0.3499 [80/118]

Training loss: 0.3522 [90/118]

Training loss: 0.4522 [100/118]

Training loss: 0.4074 [110/118]

Epoch 12 end

Validation loss at epoch 12: 0.4052

Epoch 13 start

Training loss: 0.4312 [0/118]

Training loss: 0.3718 [10/118]

Training loss: 0.3873 [20/118]

Training loss: 0.3425 [30/118]

Training loss: 0.3640 [40/118]

Training loss: 0.3701 [50/118]

Training loss: 0.3879 [60/118]

Training loss: 0.3433 [70/118]

Training loss: 0.4181 [80/118]

Training loss: 0.3484 [90/118]

Training loss: 0.3870 [100/118]

Training loss: 0.3533 [110/118]

Epoch 13 end

Validation loss at epoch 13: 0.3984

Epoch 14 start

Training loss: 0.4387 [0/118]

Training loss: 0.3737 [10/118]

Training loss: 0.3873 [20/118]

Training loss: 0.3481 [30/118]

Training loss: 0.3841 [40/118]

Training loss: 0.3389 [50/118]

Training loss: 0.4040 [60/118]

Training loss: 0.3406 [70/118]

Training loss: 0.3931 [80/118]

Training loss: 0.3658 [90/118]

Training loss: 0.4006 [100/118]

Training loss: 0.3896 [110/118]

Epoch 14 end

Validation loss at epoch 14: 0.3926

Epoch 15 start

Training loss: 0.3780 [0/118]

Training loss: 0.3658 [10/118]

Training loss: 0.3828 [20/118]

Training loss: 0.4214 [30/118]

Training loss: 0.3526 [40/118]

Training loss: 0.3215 [50/118]

Training loss: 0.3454 [60/118]

Training loss: 0.3830 [70/118]

Training loss: 0.4121 [80/118]

Training loss: 0.3796 [90/118]

Training loss: 0.3582 [100/118]

Training loss: 0.3394 [110/118]

Epoch 15 end

Validation loss at epoch 15: 0.3928

Epoch 16 start

Training loss: 0.3898 [0/118]

Training loss: 0.3741 [10/118]

Training loss: 0.3795 [20/118]

Training loss: 0.3703 [30/118]

Training loss: 0.3349 [40/118]

Training loss: 0.4097 [50/118]

Training loss: 0.4256 [60/118]

Training loss: 0.3691 [70/118]

Training loss: 0.3390 [80/118]

Training loss: 0.3891 [90/118]

Training loss: 0.3203 [100/118]

Training loss: 0.3685 [110/118]

Epoch 16 end

Validation loss at epoch 16: 0.3844

Epoch 17 start

Training loss: 0.3162 [0/118]

Training loss: 0.3540 [10/118]

Training loss: 0.3618 [20/118]

Training loss: 0.3056 [30/118]

Training loss: 0.4054 [40/118]

Training loss: 0.3790 [50/118]

Training loss: 0.3294 [60/118]

Training loss: 0.3737 [70/118]

Training loss: 0.3456 [80/118]

Training loss: 0.3492 [90/118]

Training loss: 0.3211 [100/118]

Training loss: 0.3062 [110/118]

Epoch 17 end

Validation loss at epoch 17: 0.3798

Epoch 18 start

Training loss: 0.3132 [0/118]

Training loss: 0.3743 [10/118]

Training loss: 0.3461 [20/118]

Training loss: 0.3777 [30/118]

Training loss: 0.3114 [40/118]

Training loss: 0.3312 [50/118]

Training loss: 0.2937 [60/118]

Training loss: 0.3612 [70/118]

Training loss: 0.4152 [80/118]

Training loss: 0.3434 [90/118]

Training loss: 0.3293 [100/118]

Training loss: 0.3090 [110/118]

Epoch 18 end

Validation loss at epoch 18: 0.3754

Epoch 19 start

Training loss: 0.3349 [0/118]

Training loss: 0.3302 [10/118]

Training loss: 0.2812 [20/118]

Training loss: 0.3731 [30/118]

Training loss: 0.3133 [40/118]

Training loss: 0.3012 [50/118]

Training loss: 0.4048 [60/118]

Training loss: 0.3289 [70/118]

Training loss: 0.3826 [80/118]

Training loss: 0.3694 [90/118]

Training loss: 0.2930 [100/118]

Training loss: 0.3059 [110/118]

Epoch 19 end

Validation loss at epoch 19: 0.3818

(2360, 20)

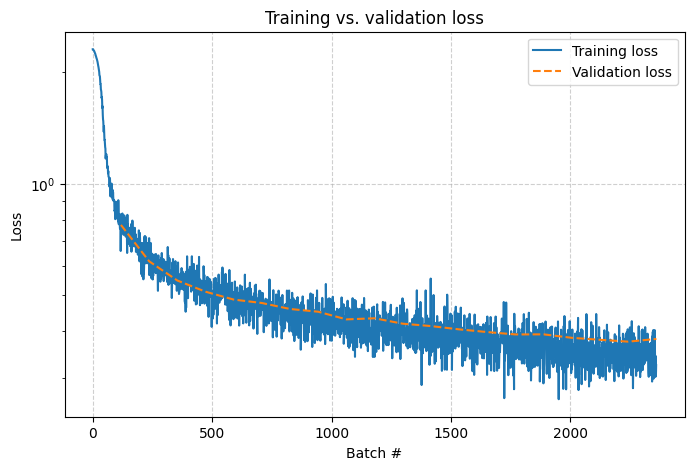

Plot the training and validation losses with Matplotlib.

import numpy as np

batches_per_epoch = 118

batches_x = np.arange(batches_per_epoch * epochs)

training_losses_y = np.array(training_losses)

epochs_x = batches_per_epoch * np.arange(epochs) + batches_per_epoch

validation_losses_y = np.array(validation_losses)

plt.figure(figsize=(8, 5))

plt.plot(batches_x, training_losses_y, label='Training loss', linestyle='-')

plt.plot(epochs_x, validation_losses_y, label='Validation loss', linestyle='--')

plt.title('Training vs. validation loss')

plt.xlabel('Batch #')

plt.ylabel('Loss')

plt.yscale('log')

plt.grid(True, linestyle='--', alpha=0.6)

plt.legend()

plt.show()

Validating the accuracy of our model

Let’s compute the validation loss and accuracy of our trained model.

X_test = None

y_test = None

model.eval()

with torch.no_grad():

    X_test, y_test = next(iter(test_loader))

    y_hat = model(X_test.to(gpu_0))

    y_test = y_test.to(gpu_0)

    loss = criterion(y_hat, y_test).item()

    y_hat = torch.argmax(y_hat, dim=1)

total = 10000

correct = (y_hat == y_test).sum().item()

accuracy = correct / total

print(f'Loss: {loss:.4f}, Accuracy: {accuracy:.4f}')

.Loss: 0.3818, Accuracy: 0.8632

Our accuracy is about $86\%$, a noticeable improvement from our simple linear model. Not bad!
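The accuracy computation boils down to taking the index of the largest logit per sample and counting matches with the labels. The sketch below shows this on a toy example with plain lists. The logits and labels are made up for illustration, and argmax and accuracy are hypothetical helper names.

```python
def argmax(values):
    """Index of the largest entry, like torch.argmax on one row."""
    return max(range(len(values)), key=lambda i: values[i])


def accuracy(logit_rows, labels):
    """Fraction of rows whose largest logit sits at the labelled class."""
    correct = sum(
        1 for row, label in zip(logit_rows, labels) if argmax(row) == label
    )
    return correct / len(labels)


# Made-up logits for 4 samples and 3 classes
logit_rows = [[2.0, 0.1, -1.0], [0.3, 0.2, 0.9], [0.0, 1.5, 1.4], [1.0, 0.0, 0.5]]
labels = [0, 2, 2, 0]
toy_accuracy = accuracy(logit_rows, labels)

# The reported accuracy of 0.8632 over 10,000 test images
implied_correct = round(0.8632 * 10_000)
print(toy_accuracy, implied_correct)
```

Read this way, the reported accuracy corresponds to 8,632 correctly classified images out of 10,000.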

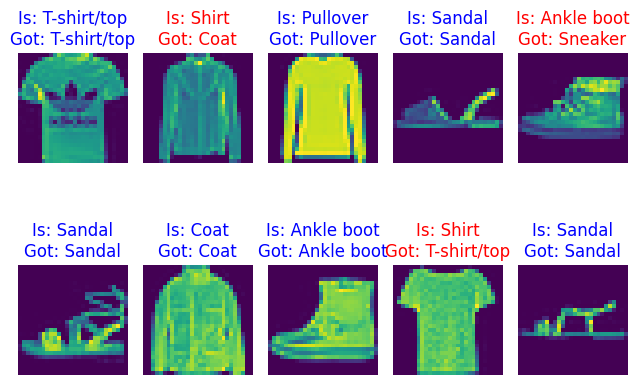

Let’s see it in action - predict labels for 10 images from our validation set and compare the predictions to the actual labels.

X_test_10, y_test_10 = X_test[:10], y_test[:10]

y_hat = model(X_test_10.to(gpu_0))

y_hat = torch.argmax(y_hat, dim=1)

X_test_10 = X_test_10.numpy()

y_hat = y_hat.cpu().numpy()

y_test_10 = y_test_10.cpu().numpy()

fig, axes = plt.subplots(2, 5)

for axis_idx, axis in enumerate(axes.flatten()):

    predicted = y_hat[axis_idx]

    actual = y_test_10[axis_idx]

    correct = (predicted == actual).item()

    color = 'blue' if correct else 'red'

    axis.set_title(f'Is: {LABELS[actual]}\nGot: {LABELS[predicted]}', color=color)

    axis.imshow(X_test_10[axis_idx].transpose(1, 2, 0))

    axis.axis('off')

fig.tight_layout()

fig.show()

Exporting our model to ONNX format

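With our model trained on the Fashion MNIST dataset with satisfactory accuracy, let’s export it to ONNX format so others can download our model for inference. PyTorch provides this as torch.onnx.export. Note that model.cpu() moves the model back to main memory in place, so it must be moved to the NPU again before any further NPU inference.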

import os

onnx_program = torch.onnx.export(model.cpu(), torch.from_numpy(X_test_10[:1]), dynamo=True)

onnx_program.save('fashion-mnist-mlp.onnx')

os.path.isfile('fashion-mnist-mlp.onnx')

W0501 10:54:44.200000 25893 torch/onnx/_internal/exporter/_schemas.py:455] Missing annotation for parameter 'input' from (input, rois, spatial_scale: 'float', pooled_height: 'int', pooled_width: 'int', sampling_ratio: 'int' = -1, aligned: 'bool' = False). Treating as an Input.

W0501 10:54:44.203000 25893 torch/onnx/_internal/exporter/_schemas.py:455] Missing annotation for parameter 'rois' from (input, rois, spatial_scale: 'float', pooled_height: 'int', pooled_width: 'int', sampling_ratio: 'int' = -1, aligned: 'bool' = False). Treating as an Input.

W0501 10:54:44.207000 25893 torch/onnx/_internal/exporter/_schemas.py:455] Missing annotation for parameter 'input' from (input, boxes, output_size: 'Sequence[int]', spatial_scale: 'float' = 1.0). Treating as an Input.

W0501 10:54:44.213000 25893 torch/onnx/_internal/exporter/_schemas.py:455] Missing annotation for parameter 'boxes' from (input, boxes, output_size: 'Sequence[int]', spatial_scale: 'float' = 1.0). Treating as an Input.

[torch.onnx] Obtain model graph for `Sequential([...]` with `torch.export.export(..., strict=False)`...

[torch.onnx] Obtain model graph for `Sequential([...]` with `torch.export.export(..., strict=False)`... ✅

[torch.onnx] Run decomposition...

[torch.onnx] Run decomposition... ✅

[torch.onnx] Translate the graph into ONNX...

[torch.onnx] Translate the graph into ONNX... ✅

True
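Checking that the file exists does not tell us whether it is a well-formed ONNX model. One way to go a step further, sketched below, is to run ONNX's structural checker on the saved file. This is an optional extra rather than part of the original notebook: validate_onnx is a hypothetical helper, and it returns None when the file or the onnx package is unavailable so that it can run anywhere.

```python
import os


def validate_onnx(path):
    """Return True if the file passes ONNX's structural checker,
    or None if the check cannot be performed."""
    if not os.path.isfile(path):
        return None
    try:
        import onnx
    except ImportError:
        return None
    model_proto = onnx.load(path)
    onnx.checker.check_model(model_proto)  # raises if the model is malformed
    return True


print(validate_onnx('fashion-mnist-mlp.onnx'))
```

A return value of True only means the graph is structurally valid. It says nothing about whether the exported model reproduces the accuracy measured above.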

Concluding remarks and going further

We saw how the PyTorch Ascend plugin torch-npu allows us to migrate existing deep learning workloads originally optimized for NVIDIA GPUs to Huawei Ascend NPUs by simply adding an import statement to the existing code. While this approach may not work $100\%$ of the time especially if the code involves low-level performance optimizations tied to NVIDIA GPU hardware, it nonetheless greatly simplifies the migration path from NVIDIA to Ascend, allowing practitioners to focus on the interesting parts of the migration instead of being bogged down by repetitive grunt work.

Porting existing PyTorch deep learning workloads directly to Ascend is just the first step of the migration from NVIDIA’s proprietary CUDA ecosystem to Huawei’s open Ascend CANN ecosystem. To maximize the benefits of accelerating deep learning workloads on Ascend NPUs, a logical next step would be to port the entire codebase from PyTorch to the MindSpore framework led by Huawei and governed by the MindSpore community. Fortunately, MindSpore provides the mindspore.mint API compatibility layer, whose design and structure closely follow PyTorch’s conventions. This enables practitioners already familiar with PyTorch to migrate their codebase to MindSpore without re-architecting their pipelines and workflows.

I hope you enjoyed following through this notebook experiment as much as I did authoring it and stay tuned for updates ;-)
